I asked Claude about the term Agency and what it thinks is needed for an AI system. I thought the response was a good answer to the argument about consciousness and AI systems. Basically the LLMs, even with reasoning, don’t have agency as we’d think of it and that might be something we consider important for… Continue reading Agency, Initiative and AI’s

Author: Michael Kubler

I want to help humanity transition to an Abundance Centered Society (ACeS)

Summary of The Unaccountability Machine

Here are the key ideas of “The Unaccountability Machine” by Dan Davies. This book explores how modern systems and organizations have developed in ways that make it increasingly difficult to identify who is responsible when things go wrong.

The central concept Davies introduces is the “accountability sink” – systems where decisions are delegated to complex rulebooks or procedures, making it impossible to trace the source of mistakes. When something goes wrong, there’s often no clear person or entity that can be held responsible.

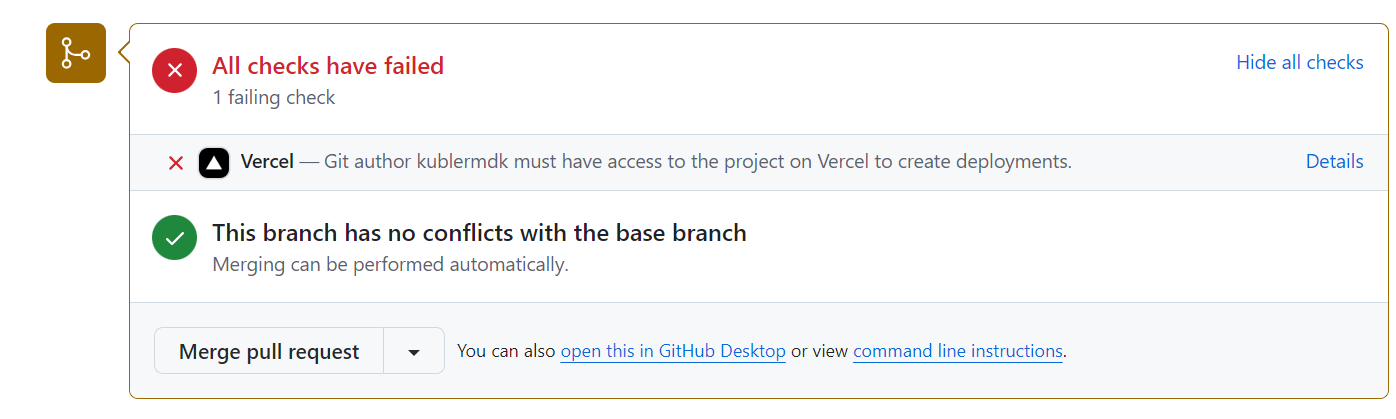

Vercel’s Stealth Move: How they Silently broke the Hobby plan Deploys

In the fast-paced world of web development, unexpected changes to our tools can bring projects to a grinding halt. This is exactly what happened when Vercel, a popular hosting platform for static and serverless deployment, quietly altered their system in a way that broke our established deployment process. As a small business relying on Vercel’s hobby plan, we found ourselves facing three days of failed builds with no warning or explanation. This article delves into our frustrating experience, the lack of communication from Vercel, and the broader implications of such unannounced changes for developers and businesses. It serves as a cautionary tale about the hidden costs of ‘free’ services and the importance of transparent communication in the tech industry.

Book Summary Alchemy: The Surprising Power of Ideas That Don’t Make Sense

I recently listened to the audiobook of Alchemy: The Surprising Power of Ideas That Don’t Make Sense by Rory Sutherland. I love it. The book seems to do a great job at providing the EQ (emotional) side of things compared to the IQ often talked about in most of the books I read on Entrepreneurship… Continue reading Book Summary Alchemy: The Surprising Power of Ideas That Don’t Make Sense

Start at the End: How to Build Products That Create Change – Book Summary

I just finished listening to the audiobook of Start at the End. Book: Start at the End: How to Build Products That Create Change by Matt Wallaert. Links: Website, Audible (Audiobook), Goodreads (reviews), Amazon (buy the book). I love the behaviour change framework. It seems more practical and useful than the BJ Fogg model for… Continue reading Start at the End: How to Build Products That Create Change – Book Summary

Ankle Heating or Cooling Brace – Random idea

You can read the PDF version of this: Idea. I’d love a shoe or leg brace which I can plug into a wall socket and which could use some smarts to heat up or cool down my Achilles tendons and ideally even massage them. The aim is to decrease injury healing time. Maybe it could also… Continue reading Ankle Heating or Cooling Brace – Random idea

What is The Zeitgeist Movement in 2024?

“Hey, what is this (Zeitgeist) Movement really all about?” (Zeitgeist Movement SA Chat) So I got a question from someone today asking what The Zeitgeist Movement is about. This is something that resonated with me because Cliff and I are working on remaking the tzm.community website and a big part of it for me is that… Continue reading What is The Zeitgeist Movement in 2024?

Lenny’s Podcast Summaries Q2 2024

I’ve downloaded a bunch of Lenny’s Podcast episodes, extracted the subtitles from them and then asked Claude (the AI) to summarise them. These aren’t all of them, just the ones that really stood out to me. Note: If anyone has any issues with this, please let me know. I love the stuff coming from Lenny’s Podcast,… Continue reading Lenny’s Podcast Summaries Q2 2024

Meditations on Moloch – Summary

Something I’m enjoying doing is using Claude (Anthropic’s equivalent to ChatGPT) to summarise podcasts, books and, in this case, an article I keep hearing about but never completed reading. Scott Alexander posted the Meditations on Moloch article in 2014 and the Game B community, especially people like Daniel Schmachtenberger, has been talking about multi-polar traps… Continue reading Meditations on Moloch – Summary

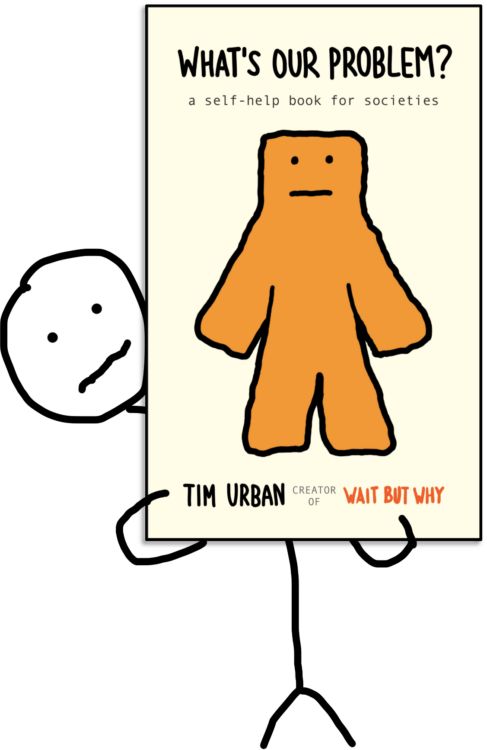

What’s Our Problem by Tim Urban – Summary

What’s our problem? I highly recommend the Book / Audiobook of What’s Our Problem by Wait But Why’s author Tim Urban. Michael Kubler: I really liked this book and felt like the topics discussed in it are important for society to understand. Unfortunately it’s hard for me to take detailed notes when listening to the… Continue reading What’s Our Problem by Tim Urban – Summary